Overview

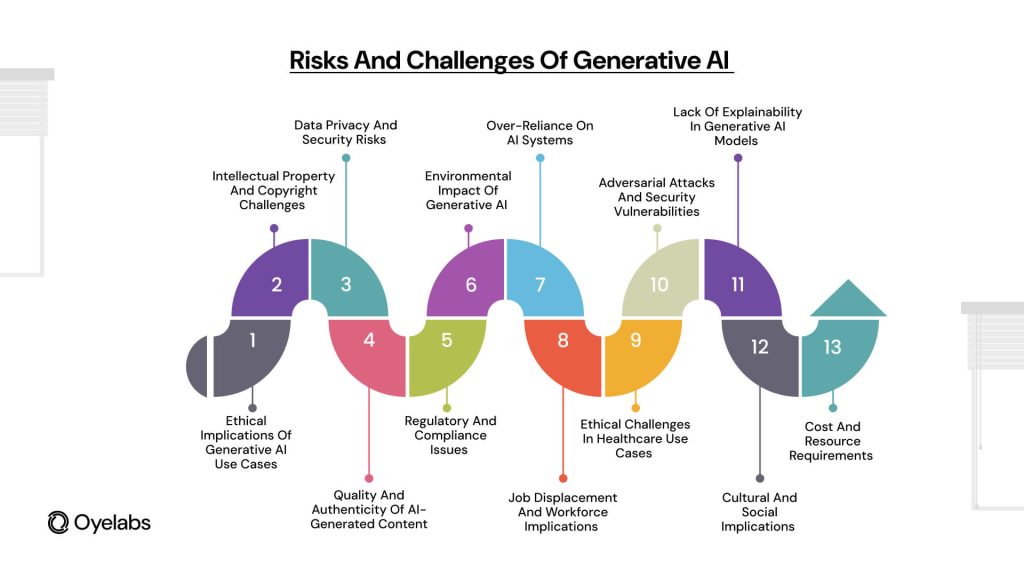

As generative AI models such as Stable Diffusion continue to evolve, they are reshaping content creation through automation, personalization, and enhanced creativity. However, this progress brings pressing ethical challenges, including misinformation, fairness concerns, and security threats.

According to a 2023 report by the MIT Technology Review, a vast majority of AI-driven companies have expressed concerns about ethical risks. This finding underscores the urgency of addressing AI-related ethical concerns.

The Role of AI Ethics in Today’s World

The concept of AI ethics revolves around the rules and principles governing the fair and accountable use of artificial intelligence. In the absence of ethical considerations, AI models may exacerbate biases, spread misinformation, and compromise privacy.

A recent Stanford AI ethics report found that some AI models demonstrate significant discriminatory tendencies, leading to biased law enforcement practices. Addressing these challenges is crucial for maintaining public trust in AI.

Bias in Generative AI Models

A major issue with AI-generated content is bias. Because generative models are trained on extensive datasets, they often reflect the historical biases present in that data.

The Alan Turing Institute’s latest findings revealed that image generation models tend to produce biased outputs, such as depicting men in leadership roles more frequently than women.

To mitigate these biases, organizations should conduct fairness audits, integrate ethical AI assessment tools, and regularly monitor AI-generated outputs.
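A fairness audit of an image generator can start with something as simple as counting how often each group appears in the outputs for a given prompt. The sketch below is a minimal illustration under stated assumptions: the generated images are assumed to have already been labeled (by human reviewers or a classifier, not shown), the function names `representation_rates` and `flag_imbalance` are hypothetical, and the even-split baseline with a 0.2 tolerance is an illustrative choice, not an established standard.

```python
from collections import Counter


def representation_rates(labels):
    """Return the share of outputs assigned to each group label."""
    counts = Counter(labels)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {group: n / total for group, n in counts.items()}


def flag_imbalance(labels, expected_groups, tolerance=0.2):
    """Flag groups whose share deviates from an even split by more than `tolerance`.

    Assumption: parity (each expected group equally likely) is the baseline.
    A real audit may compare against a different reference distribution.
    """
    rates = representation_rates(labels)
    target = 1 / len(expected_groups)
    return {
        group: rates.get(group, 0.0)
        for group in expected_groups
        if abs(rates.get(group, 0.0) - target) > tolerance
    }


# Hypothetical labels for 10 images generated from the prompt "a CEO at work"
labels = ["man"] * 8 + ["woman"] * 2
print(representation_rates(labels))  # {'man': 0.8, 'woman': 0.2}
print(flag_imbalance(labels, ["man", "woman"]))  # {'man': 0.8, 'woman': 0.2}
```

Running a check like this on a schedule, across many prompts, is one way to turn "regularly monitor AI-generated outputs" into a repeatable process.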

Misinformation and Deepfakes

The spread of AI-generated disinformation is a growing problem, threatening the authenticity of digital content.

For example, during the 2024 U.S. elections, AI-generated deepfakes sparked widespread misinformation concerns. According to a report by the Pew Research Center, over half of the population fears AI’s role in spreading misinformation.

To address this issue, organizations should invest in AI detection tools, educate users on spotting deepfakes, and develop public awareness campaigns.

How AI Poses Risks to Data Privacy

Protecting user data is a critical challenge in AI development. Training data for AI may contain sensitive information, potentially exposing personal user details.

Recent EU findings indicate that many AI-driven businesses have weak compliance measures.

To protect user rights, companies should develop privacy-first AI models, minimize data retention risks, and regularly audit AI systems for privacy risks.

Final Thoughts

Navigating AI ethics is crucial for responsible innovation. To ensure data privacy and transparency, companies should integrate AI ethics into their strategies.

With the rapid growth of AI capabilities, organizations need to collaborate with policymakers. By embedding ethics into AI development from the outset, AI can be harnessed as a force for good.

Comments on “The Ethical Challenges of Generative AI: A Comprehensive Guide”